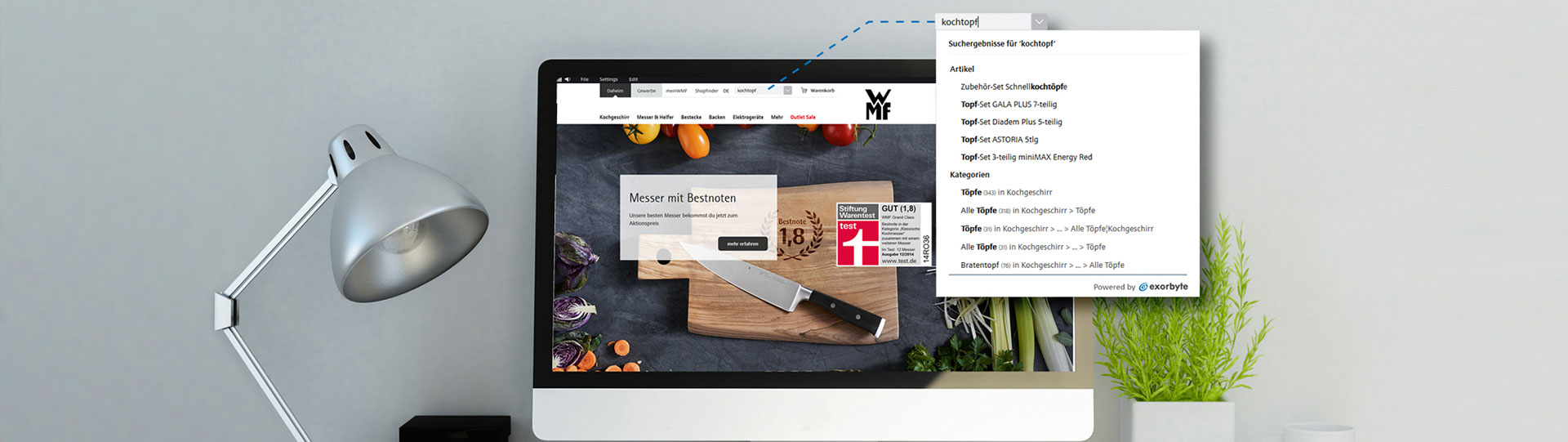

E-COMMERCE SEARCH & SHOP NAVIGATION

Help your visitors find the right product in your online shop quickly and easily.

TOP FEATURES FOR TOP SHOPS

High-quality search, error-tolerant suggest, a campaign tool, and an analytics function are your guarantee of high conversion rates.

MORE THAN 500 SHOPS RELY ON ECS

COMPATIBLE WITH ALL SHOP SYSTEMS

EASY INTEGRATION